The Creature Server is a fairly complex soft real-time application that sits at the heart of my animatronics control network. It’s written in C++ and runs on macOS and Linux, but the primary target is Linux.

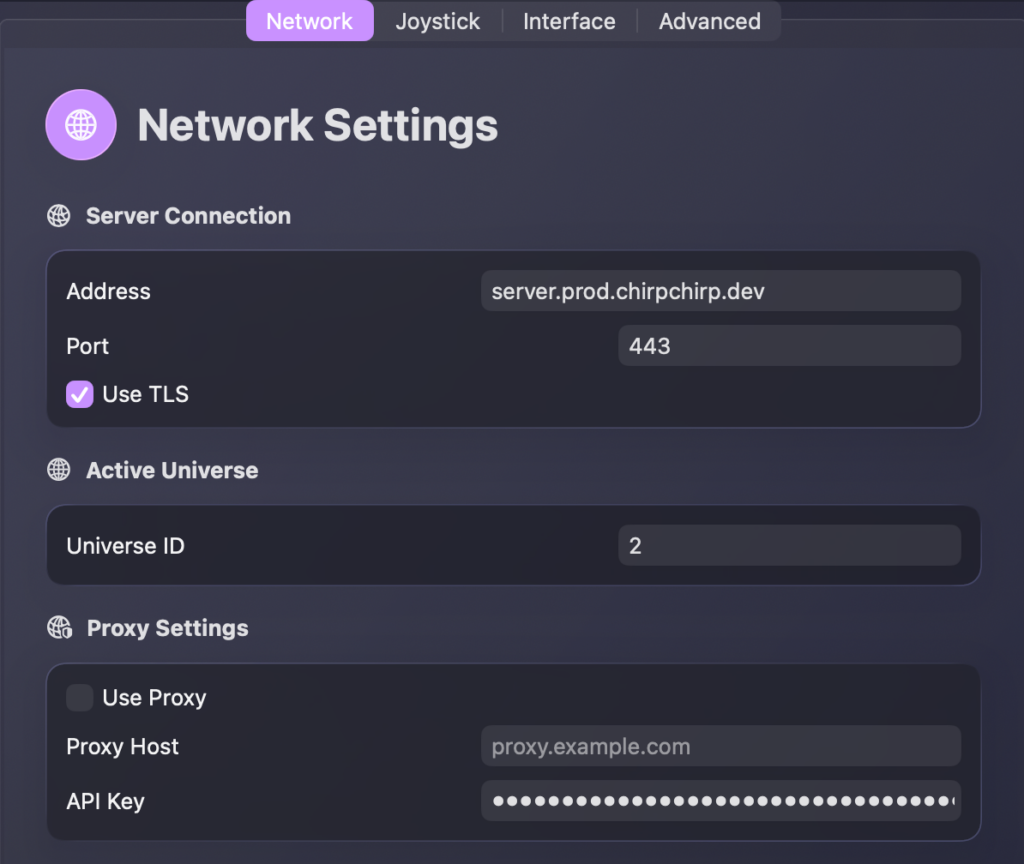

The Creature Console and the server communicate over a RESTful API and a WebSocket. Because moving animatronics is a real-time operation, the protocol isn’t designed to traverse anything beyond a LAN. I only use Ethernet, to keep latency low and jitter to a minimum. While the primary use is on the LAN, I do have a proxy server on my network that exposes both the RESTful API and the WebSocket to the Internet, but it’s locked behind an API key.

The server itself is stateless. All state is maintained in the database (MongoDB).

Event Loop

The heart of the creature server is an event loop. Each frame is 1 ms (1000 Hz).

It maintains an event queue internally, and on each frame it looks in the queue to see if there’s work to do. If there is, it performs that work. If there’s not, it sleeps and waits for the next frame.

When an animation is scheduled, each frame of animation is put into the queue with the spacing that the animation was recorded at (usually 50Hz, or 20ms per frame).

This makes the creature server soft real-time: it uses the wall clock for pacing, but overrunning a frame only dilates time. It doesn’t cause a crash or preempt the work. Preempting the work wouldn’t make sense, since it would leave the creature in an odd state. It’s better for time to dilate slightly instead.
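
To make the frame pacing concrete, here is a minimal sketch of a loop like the one described above. It is not the server’s actual code: `EventLoop`, `schedule`, `scheduleAnimation`, and `runOneFrame` are hypothetical names, and the queue is assumed to be a simple map keyed by frame number.

```cpp
#include <chrono>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <map>
#include <thread>
#include <utility>

// Hypothetical sketch: events are keyed by the frame they should run on.
class EventLoop {
public:
    using Event = std::function<void()>;

    void schedule(std::uint64_t frame, Event event) {
        queue_.emplace(frame, std::move(event));
    }

    // Queue one event per animation frame, spaced by the recording interval.
    // A 50 Hz recording has 20 ms per frame, which is 20 loop frames at 1 ms.
    void scheduleAnimation(std::uint64_t startFrame, std::uint32_t msPerFrame,
                           std::size_t frameCount,
                           const std::function<void(std::size_t)>& playFrame) {
        for (std::size_t i = 0; i < frameCount; ++i) {
            schedule(startFrame + i * msPerFrame, [playFrame, i] { playFrame(i); });
        }
    }

    // Run everything due on or before the current frame, then advance.
    void runOneFrame() {
        while (!queue_.empty() && queue_.begin()->first <= currentFrame_) {
            Event event = std::move(queue_.begin()->second);
            queue_.erase(queue_.begin());
            event();
        }
        ++currentFrame_;
    }

    // Pace frames against the wall clock at 1 ms per frame.
    void run(std::uint64_t frames) {
        constexpr std::chrono::milliseconds framePeriod{1};
        auto frameStart = std::chrono::steady_clock::now();
        for (std::uint64_t i = 0; i < frames; ++i) {
            runOneFrame();
            const auto deadline = frameStart + framePeriod;
            const auto now = std::chrono::steady_clock::now();
            if (now < deadline) {
                std::this_thread::sleep_until(deadline);
                frameStart = deadline;  // on time: keep the nominal cadence
            } else {
                frameStart = now;       // overran: later frames shift (time dilates)
            }
        }
    }

    std::uint64_t currentFrame() const { return currentFrame_; }
    std::size_t pending() const { return queue_.size(); }

private:
    std::multimap<std::uint64_t, Event> queue_;
    std::uint64_t currentFrame_ = 0;
};
```

Note that an overrun in this sketch never drops or interrupts work. The late frame finishes, and every later frame simply starts a little later than it nominally would.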

Idle Animations

A creature should never be sitting still. That’s the whole point of idle animations — they keep every creature moving at all times, even when nobody is interacting with them. A still animatronic looks broken. A gently moving one looks alive.

Each creature has a list of idle_animation_ids in its definition file. These are short animations I’ve recorded with the joystick — subtle movements like shifting weight, looking around, or preening. The server continuously plays through these in a shuffled order, and it tracks which one played last so you don’t get the same animation twice in a row.
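
One way to get “shuffled, but never the same one twice in a row” is to shuffle the list like a deck of cards and fix up the boundary when the deck is reshuffled. The sketch below assumes the IDs are unique strings; `IdlePicker` is a hypothetical name, not the server’s class.

```cpp
#include <algorithm>
#include <cstddef>
#include <random>
#include <stdexcept>
#include <string>
#include <utility>
#include <vector>

// Hypothetical sketch: plays through a shuffled deck of idle animation IDs.
class IdlePicker {
public:
    explicit IdlePicker(std::vector<std::string> ids,
                        unsigned seed = std::random_device{}())
        : ids_(std::move(ids)), rng_(seed) {
        if (ids_.empty()) throw std::invalid_argument("no idle animations");
        reshuffle();
    }

    // Returns the next ID, reshuffling whenever the deck runs out.
    const std::string& next() {
        if (cursor_ == ids_.size()) reshuffle();
        last_ = ids_[cursor_++];
        return last_;
    }

private:
    void reshuffle() {
        std::shuffle(ids_.begin(), ids_.end(), rng_);
        // If the new deck starts with the animation that just played,
        // swap it to the back so it cannot play twice in a row.
        if (ids_.size() > 1 && ids_.front() == last_) {
            std::swap(ids_.front(), ids_.back());
        }
        cursor_ = 0;
    }

    std::vector<std::string> ids_;
    std::mt19937 rng_;
    std::string last_;        // the animation that played most recently
    std::size_t cursor_ = 0;  // position in the current deck
};
```

With a single idle animation there is nothing to alternate with, so in that case the sketch just repeats it.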

Interruption and Resumption

The clever part is how idle loops interact with everything else. When something more interesting needs to happen — an ad-hoc animation, a streaming session, a playlist — the idle loop for that creature gets interrupted. The server cancels the idle session and the new activity takes over immediately.

When the activity finishes, the server automatically restarts the idle loop. This happens in the playback teardown: once an animation completes and the creature doesn’t have another session queued up, idle playback kicks back in. The creature never notices the gap because the transition from “doing something” back to “idling” is seamless.

This gets tricky when multiple creatures share a universe. If Beaky starts talking, her idle loop needs to stop, but Mango’s idle loop on the same universe should keep going. The session manager handles this by selectively interrupting only the idle sessions that overlap with the new activity, leaving the others alone.
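
A rough sketch of that selective interruption, under the assumption that sessions are tracked per creature rather than per universe. `SessionManager`, `CreatureState`, and the method names here are hypothetical stand-ins for whatever the real session manager uses.

```cpp
#include <map>
#include <set>
#include <string>

// Hypothetical per-creature bookkeeping.
struct CreatureState {
    unsigned universe = 0;     // stored for reference; interruption ignores it
    bool idleRunning = false;
    int activeOrQueued = 0;    // activities playing or waiting for this creature
};

class SessionManager {
public:
    void startIdle(const std::string& creature, unsigned universe) {
        CreatureState& state = creatures_[creature];
        state.universe = universe;
        state.idleRunning = true;
    }

    // Interrupt idle playback only for the creatures involved in the activity.
    // Other creatures on the same universe keep idling.
    void startActivity(const std::set<std::string>& involved) {
        for (const std::string& creature : involved) {
            CreatureState& state = creatures_[creature];
            state.idleRunning = false;
            ++state.activeOrQueued;
        }
    }

    // Playback teardown: resume idling once nothing else is queued.
    void finishActivity(const std::set<std::string>& involved) {
        for (const std::string& creature : involved) {
            auto it = creatures_.find(creature);
            if (it == creatures_.end() || it->second.activeOrQueued == 0) continue;
            if (--it->second.activeOrQueued == 0) it->second.idleRunning = true;
        }
    }

    bool isIdling(const std::string& creature) const {
        auto it = creatures_.find(creature);
        return it != creatures_.end() && it->second.idleRunning;
    }

private:
    std::map<std::string, CreatureState> creatures_;
};
```

In this sketch, starting an activity for Beaky leaves Mango’s idle flag untouched even though both sit on the same universe, and Beaky’s idle loop comes back only when her last queued activity finishes.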

Scheduling

Idle animations are scheduled in the event loop just like any other animation — there’s nothing special about them from the event loop’s perspective. They’re decoded into DMX frames, queued up, and played back one frame at a time. When one finishes, the server picks the next one from the shuffled list and schedules it. The result is a creature that’s always moving in a natural, varied way without any manual intervention.

Which animations go into the idle pool is defined in the creature’s definition file, and I can start and stop idle loops for individual creatures from the Creature Console. Playlists take over when they’re running and hand back to idle when they’re done.

Communication

The network communication is pretty complex, so rather than explain it here, I’ve dedicated an entire page to it.

Voice Generation

All voice generation is done via ElevenLabs. Each creature has their voice settings recorded in their Creature Definition file, and the server uses them when calling out to the ElevenLabs API.

When generating speech, the server calls ElevenLabs’ streaming endpoint with timestamps enabled. This gives back not just the audio, but character-level alignment data that tells the server exactly when each character is being spoken. This is what makes the new lip sync system possible.

Ad-Hoc Animations

One of the things I’m the most proud of is the ability to create animations on the fly just by typing some text. I use this constantly — Mango sits behind my desk at work, and I use the mini console to make him chime in during Zoom calls.

When the server receives an ad-hoc animation request, it does a surprising amount of work behind the scenes:

- Calls ElevenLabs to generate the speech audio using the creature’s voice settings

- Generates lip sync data from the alignment timestamps that come back (see Lip Sync below)

- Picks a random body animation from the creature’s speech_loop_animation_ids (these are short loops of natural-looking movement recorded with the joystick)

- Blends the lip sync mouth data into the body animation frame by frame, replacing the mouth channel while keeping the rest of the body moving naturally

- Saves the finished animation to a TTL collection in MongoDB (they auto-expire after 24 hours since there’s rarely a need to replay them)

- Plays it on the creature

The whole thing runs as a background job, so the API returns immediately with a job ID. The Console shows the job progress and the creature starts talking a few seconds later.
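
The blending step in the list above is the easiest one to show in code. This sketch assumes each frame is a vector with one byte per channel and that the mouth lives on a single known channel; `Frame`, `blendMouth`, and `mouthChannel` are illustrative names, and the real frame format may differ.

```cpp
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

using Frame = std::vector<std::uint8_t>;  // assumed: one byte per channel

// Produce one output frame per mouth sample. The body loop repeats as often
// as needed, and only the mouth channel is overwritten.
std::vector<Frame> blendMouth(const std::vector<Frame>& bodyLoop,
                              const std::vector<std::uint8_t>& mouthTrack,
                              std::size_t mouthChannel) {
    std::vector<Frame> blended;
    if (bodyLoop.empty()) return blended;
    blended.reserve(mouthTrack.size());
    for (std::size_t i = 0; i < mouthTrack.size(); ++i) {
        Frame frame = bodyLoop[i % bodyLoop.size()];  // loop the body animation
        if (mouthChannel < frame.size()) {
            frame[mouthChannel] = mouthTrack[i];      // replace the mouth only
        }
        blended.push_back(std::move(frame));
    }
    return blended;
}
```

Because the speech decides the length of the result, a short body loop just wraps around until the audio is done.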

Play vs Prepare

There are actually two modes for ad-hoc animations. “Play” creates the animation and immediately interrupts whatever the creature is doing to play it. “Prepare” creates the animation but holds it — you trigger playback manually when the timing is right.

The prepare mode exists because timing is the most important thing in comedy. If Mango is going to respond to something someone said in a meeting, I want exact control of when he starts talking. So I’ll prepare the animation ahead of time and hit play at just the right moment. It makes the reactions feel spontaneous even though I queued them up.
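
The difference between the two modes comes down to what happens once the animation has been built. A small sketch, with hypothetical names (`AdHocMode`, `AdHocDispatcher`) and a plain list standing in for actual playback:

```cpp
#include <set>
#include <string>
#include <vector>

enum class AdHocMode { Play, Prepare };

// Hypothetical sketch: decides what to do with a finished ad-hoc animation.
class AdHocDispatcher {
public:
    // Called when the background job has finished building the animation.
    void onAnimationReady(const std::string& animationId, AdHocMode mode) {
        if (mode == AdHocMode::Play) {
            played_.push_back(animationId);  // interrupt and play right away
        } else {
            prepared_.insert(animationId);   // hold until triggered
        }
    }

    // Manual trigger for a prepared animation. Returns false if unknown.
    bool trigger(const std::string& animationId) {
        if (prepared_.erase(animationId) == 0) return false;
        played_.push_back(animationId);
        return true;
    }

    const std::vector<std::string>& played() const { return played_; }

private:
    std::set<std::string> prepared_;
    std::vector<std::string> played_;  // stand-in for real playback
};
```

All the slow work (speech, lip sync, blending) is done before the animation is held, so the trigger itself has nothing left to wait for.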

Lip Sync

The lip sync system has been completely redone. I used to use Rhubarb Lip Sync, which worked by analyzing an audio file after the fact to figure out what mouth shapes to use. It worked, but it was slow and had to process the entire audio file before lip sync data was available.

The new system takes two different approaches depending on where the audio is coming from:

ElevenLabs Path

When the server generates speech via ElevenLabs, it requests character-level timing data along with the audio. The server then reconstructs words from the character sequences, looks up the phonemes for each word in the CMU Pronouncing Dictionary, and maps those phonemes to viseme mouth shapes (using the same A through F letter system that Rhubarb used). This all happens as the audio streams in, so the lip sync data is ready the moment the audio is.
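
The word reconstruction step might look something like the sketch below. The `CharTiming` and `WordTiming` structs are hypothetical containers for the alignment data, not ElevenLabs types, and words are upper-cased on the assumption that the dictionary keys are stored that way.

```cpp
#include <cctype>
#include <string>
#include <vector>

struct CharTiming { char ch; double start; double end; };           // seconds
struct WordTiming { std::string word; double start; double end; };  // seconds

// Group consecutive letters (and apostrophes) into words. A word starts when
// its first character starts and ends when its last character ends.
std::vector<WordTiming> wordsFromCharacters(const std::vector<CharTiming>& chars) {
    std::vector<WordTiming> words;
    bool inWord = false;
    for (const CharTiming& c : chars) {
        const unsigned char uc = static_cast<unsigned char>(c.ch);
        const bool wordChar = std::isalpha(uc) != 0 || c.ch == '\'';
        if (!wordChar) {  // spaces and punctuation end the current word
            inWord = false;
            continue;
        }
        if (!inWord) {
            words.push_back({std::string(), c.start, c.end});
            inWord = true;
        }
        words.back().word.push_back(static_cast<char>(std::toupper(uc)));
        words.back().end = c.end;
    }
    return words;
}
```

Since this only ever looks at the characters received so far, it fits the streaming case: a word can be handed to the dictionary lookup as soon as the character after it arrives.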

Whisper Path

For audio that didn’t come from ElevenLabs — like sounds uploaded manually — the server uses whisper.cpp to transcribe the audio and extract word-level timestamps. Those timestamps feed into the same CMU dictionary lookup and viseme mapping pipeline. The result is the same lip sync data, just derived from a different source.

Both paths produce Rhubarb-compatible viseme data, so the rest of the animation system doesn’t need to know or care how the lip sync was generated.
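
Once a word has a start time, an end time, and a phoneme list, both paths can share the same last step. The sketch below spreads a word’s phonemes evenly across its time span, which is an assumption on my part, and the phoneme table is a small illustrative subset rather than the server’s real mapping.

```cpp
#include <cctype>
#include <cstddef>
#include <map>
#include <string>
#include <vector>

struct MouthCue { double start; double end; char shape; };  // shape: 'A'..'F'

// CMU dictionary vowels carry a stress digit: "OW1" becomes "OW".
std::string stripStress(std::string phoneme) {
    if (!phoneme.empty() &&
        std::isdigit(static_cast<unsigned char>(phoneme.back())) != 0) {
        phoneme.pop_back();
    }
    return phoneme;
}

// Illustrative subset of a phoneme-to-shape table (not the real one).
char shapeForPhoneme(const std::string& phoneme) {
    static const std::map<std::string, char> table = {
        {"P", 'A'}, {"B", 'A'}, {"M", 'A'},     // closed lips
        {"EH", 'C'}, {"AE", 'C'}, {"AH", 'C'},  // open mouth
        {"AA", 'D'},                            // wide open
        {"AO", 'E'}, {"ER", 'E'},               // slightly rounded
        {"UW", 'F'}, {"OW", 'F'}, {"W", 'F'},   // puckered
    };
    const auto it = table.find(stripStress(phoneme));
    return it == table.end() ? 'B' : it->second;  // 'B' for everything else
}

// Spread the phonemes evenly over the word and merge repeated shapes.
std::vector<MouthCue> cuesForWord(const std::vector<std::string>& phonemes,
                                  double start, double end) {
    std::vector<MouthCue> cues;
    if (phonemes.empty()) return cues;
    const double step = (end - start) / phonemes.size();
    for (std::size_t i = 0; i < phonemes.size(); ++i) {
        const char shape = shapeForPhoneme(phonemes[i]);
        const double cueEnd = start + (i + 1) * step;
        if (!cues.empty() && cues.back().shape == shape) {
            cues.back().end = cueEnd;  // extend the previous cue
        } else {
            cues.push_back({start + i * step, cueEnd, shape});
        }
    }
    return cues;
}
```

For example, the CMU dictionary entry for HELLO is `HH AH0 L OW1`, which this toy table turns into the shape sequence B, C, B, F.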

Speech-to-Text

The server also provides a speech-to-text API for the Creature Listener. The listener can send raw audio to the server and get back a transcript, which is much faster than running whisper.cpp locally on a Pi 5. The server uses the same whisper.cpp instance it uses for lip sync, protected by a mutex since whisper isn’t reentrant.

This was a natural addition since the server already had whisper.cpp loaded for lip sync work. Rather than have the Pi struggle through transcription, it just ships the audio over the network and gets back text in a fraction of the time.

Streaming Sessions

The most complex piece of the server is the streaming ad-hoc session pipeline. This is what makes real-time conversation possible — the Creature Listener and Creature Agent both use it.

Where single-shot ad-hoc animations wait for the entire text to be ready before starting, streaming sessions process text sentence by sentence as it arrives from an LLM. This is the difference between “type something and wait a few seconds” and “have a conversation in real time.”

A streaming session works like this:

- Start — The client opens a session, and the server loads the creature’s config, a base animation for body movement, and the CMU dictionary.

- Add text — Each sentence arrives as it’s generated by the LLM. The server immediately kicks off an async task that calls ElevenLabs for TTS (with request ID chaining for prosody continuity between sentences), generates lip sync data from the alignment timestamps, blends the mouth movements into the base animation, and saves it to the database.

- Pipelined playback — A background thread monitors the async tasks and starts playing each sentence’s animation as soon as it’s ready. The first sentence interrupts whatever the creature is currently doing, and subsequent sentences queue up seamlessly.

- Finish — The client signals that no more sentences are coming, and the playback thread drains the queue.

The key insight is that everything is pipelined. While the LLM is still generating sentence 3, the server might be doing TTS on sentence 2 and already playing sentence 1 on the creature. Frame offsets are chained between sentences using promise/future pairs so the body animation is seamless across sentence boundaries. This is what gets the time-to-first-word down to about 2 seconds instead of waiting for the entire response to be generated. 💜
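
The frame offset chaining is the least obvious part, so here is a sketch of the idea. Each sentence’s task does its slow work in parallel, then waits on a future for the frame where the previous sentence ended and fulfils a promise with its own end frame. `StreamingSession`, `SentenceResult`, and `fakeTtsFrameCount` are hypothetical, and the TTS call is faked.

```cpp
#include <cstddef>
#include <future>
#include <string>
#include <utility>
#include <vector>

struct SentenceResult {
    std::string text;
    std::size_t startFrame;  // offset into the body animation
    std::size_t frameCount;
};

// Placeholder for TTS: pretend each character is three frames of audio.
std::size_t fakeTtsFrameCount(const std::string& text) { return text.size() * 3; }

class StreamingSession {
public:
    StreamingSession() {
        std::promise<std::size_t> first;
        nextStart_ = first.get_future();
        first.set_value(0);  // the first sentence starts at frame 0
    }

    // Kick off an async task for one sentence as soon as it arrives.
    void addText(std::string sentence) {
        std::promise<std::size_t> endOffset;
        std::future<std::size_t> myStart = std::move(nextStart_);
        nextStart_ = endOffset.get_future();
        tasks_.push_back(std::async(
            std::launch::async,
            [text = std::move(sentence), start = std::move(myStart),
             next = std::move(endOffset)]() mutable {
                const std::size_t frames = fakeTtsFrameCount(text);  // parallel work
                const std::size_t startFrame = start.get();  // previous sentence's end
                next.set_value(startFrame + frames);         // unblock the next one
                return SentenceResult{text, startFrame, frames};
            }));
    }

    // Drain the tasks in order, as the playback thread would.
    std::vector<SentenceResult> finish() {
        std::vector<SentenceResult> results;
        for (std::future<SentenceResult>& task : tasks_) results.push_back(task.get());
        tasks_.clear();
        return results;
    }

private:
    std::future<std::size_t> nextStart_;
    std::vector<std::future<SentenceResult>> tasks_;
};
```

The only thing one sentence ever waits on from its predecessor is a single number, so the expensive TTS calls can all overlap.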

Legacy Stuff

The current version of the Creature Server runs on some beefy hardware on my network. As my use of animatronics has grown, along with the amount of processing I ask the server to do, it has outgrown what a Pi can comfortably run.

When it was running on a Pi, I made a cute hat for a Pi running the server! The LEDs are connected via GPIO pins on the server, and are triggered via events in the event loop. (Turning them on and off is scheduled like any other event.)